Hi Psyche,

Continuing aside: I have really fussy ears, it’s a curse. I have played in orchestras, brass bands, rock bands, jazz, blues etc. I also own about ten or so top orchestral libraries. I have played, in chronological order, trombone, trumpet, cornet, guitars, flute, saxes, real Hammonds, real Rhodes and so many keyboards I can’t count. The current state of sampling NEVER convinces my ears.

There is a long discussion here which we both understand, so here are a couple of quick points. If you hit a drum skin you never get the same oscilloscopic reading back, ever. The placement of the stick, the tension of the skin, the room verb: it’s never the same. One might not be able to consciously identify it, but one hears it. Every real instrument has its character; legato is different for every instrument that can do it. On a trumpet, there is a sound which is a kind of slide between one valve position and another, with no lipping. You can’t do this on a sax, or a harp. There are literally thousands of reasons why sampling in its current state does not work. Sampled staccato in particular makes me shiver. It is horrible, because staccato is such a personal thing and so dependent on the setting (think James Brown horns, think Tchaikovsky’s Nutcracker). Staccato is phrased and has different dynamics - yes, you can alter this a bit.

PO: You know about this word? It was coined by Edward de Bono, the lateral thinking guy. The idea is to entertain the ridiculous as a solution, on the way to a new solution. Briefly, it butts in on the scientific method: assess, develop a hypothesis, devise a method to test that hypothesis, run the test and, if the solution is not satisfactory, develop a new hypothesis and go around the cycle again. PO is the creative energy of randomness, applied before the generation of a new hypothesis. Po breaks all rules: Cubase, for example, might become a dancing duck, the new Queen of Siberia or whatever you wish. Po is choosing a random frame of reference and applying it inappropriately, in order to discover new aspects of its target. It’s related to brainstorming but is a more exact, defined tool. Using PO does not mean that you are not embarking on reasoning; if you build a house from Lego bricks, there may be a stage where you dismantle stuff and lay it on the floor. Po is an integral part of the scientific method which is often overlooked.

One human failing that can affect a whole industry is “solution-blindness”. By this I mean that if a solution to a problem has already been found to be satisfactory, then thinking around other solutions dries up. When people first realised that computers could type and print, the early solutions were to emulate paper, but later it was realised that they could do much more than a typewriter - check grammar, add pictures, include hyperlinks.

We now have AI, we have gaming tech, we have superfast CPU computing, we have language models, we have superfast GPUs. We are soon to have photonic computers, which compute about 1000 times faster. The chips are here.

Enough preamble:

Po:

PO: A computer can produce a visit to a concert hall by a conductor-composer.

Now imagine a game, not a music device, a game. It uses the GPU.

You walk into the room. You are Majestico, you are the great composer and conductor. All around you are musicians that you can select for your project. That’s Yehudi Menuhin sitting in the corner.

Today, though, you have decided that you want a jazz quintet.

You call forth the saxophonists. You have before you Stan Getz, Coltrane, Ben Webster, Parker etc. You feel in a Latin mood, so you gravitate towards Getz. You get him to play a run or two - a list of arpeggios is offered for your convenience - then you change his mouthpiece and harden the reed (which gives a softer sound). Now you have something to work with.

You look for a trumpet. You have purchased Gillespie, Chet Baker, Bix Beiderbecke, Miles Davis. All the samples from each player have been curated from the net by AI. Well, you think, maybe Chet playing Latin would be interesting… you choose his mute.

Maybe you choose your bass by describing the qualities you desire: subtle, fewer notes, Latin sensibilities, emulating a surdo. A guy appears who meets your requirements.

You choose your band.

Next you choose your concert hall. Which place? You get presented with clips of smoky Ronnie Scott’s, the Royal Albert Hall, etc. You choose your venue (and its reverb) and you position your people on the stage. You get to choose mics and mic positions - these mics now being given an AI treatment - they are “realer”.

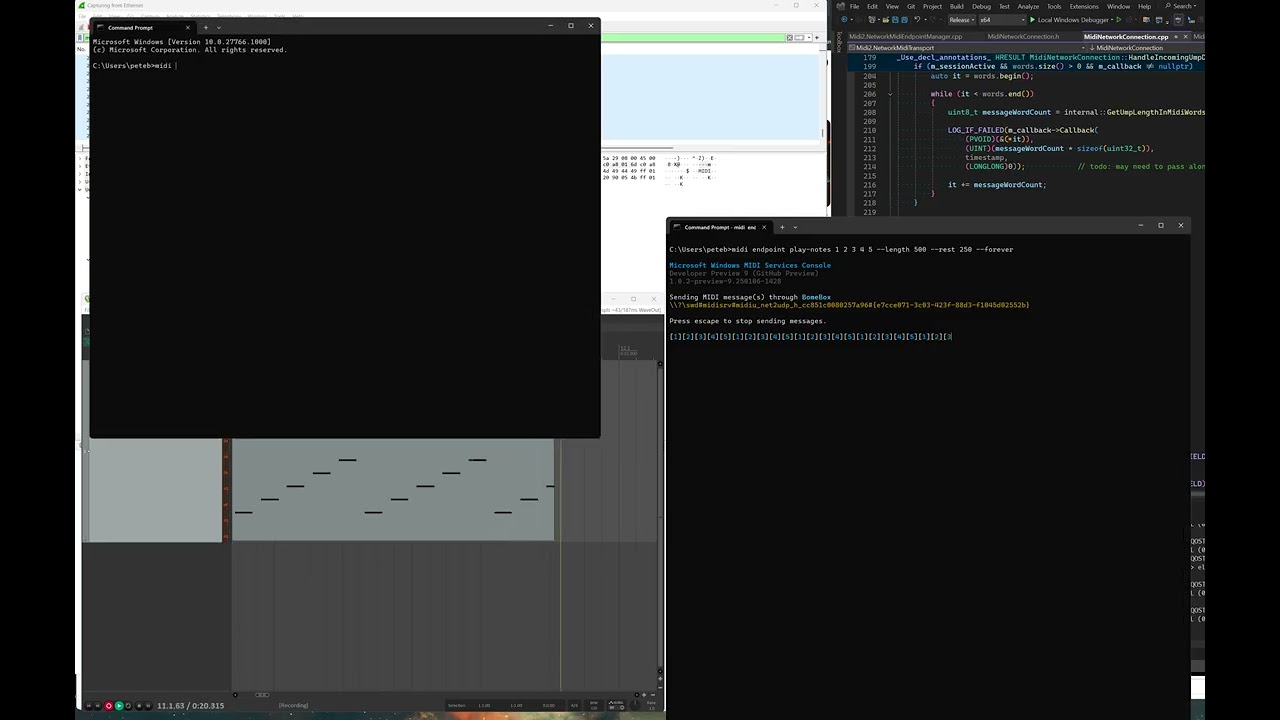

So now you need music. Well, you can simply import your notation, or you can click into a notation app like Dorico and do your business. Dorico can now be talked to. You can, for example, ask “create me a Cuban Latin style, 32 bars AABA, key Db, tempo 85, use some swing and keep it dolce”. You may not wish to do this; you may wish to create your own lines, and you can also ask AI to do this. You can write them in manually. Dorico now needs fewer buttons, as you can simply say “please accent all the quavers in bar nine and reverse their stems, please move them to voice 2”.

Cubase AI is also available. You can load a staff into C.AI and sample audiences clapping, for example, if you so choose. Cubase is now able to talk to you too: it can trawl the internet for samples of clapping according to your request, “clapping in a big hall, with yelps”, or, if you wanted something different, “create a reverb from a bat cave”.

Being game-based in its approach, it lets one enter a concert hall, view rooms, view notation, oscilloscopic views and key editors as one pleases.

This is my Po of the future of Cubase and the music industry, once we realise we do not have to stick with current “Charlie Chaplin era” technology and solutions.

People need to think differently.

Z