Hi @ctreitzell

I think we might be on different pages/logic…my comparison is one of conceptual abstractions…i.e. what makes any software good/better. Underneath, it was also about the sound tools that use the same graphic paradigm. I also used Photoshop (and many graphics packages that ran on SGI) for television work. Things like the frustration of masking…it's probably just my situation…

it just sounds like you are looking for automated results when I read this stuff from you.

No, just putting stuff into mature tools, but I admit that's my problem. I did a master's in UX/UI (Digital Media) and love great tools. Just having this forum opened me to the idea of using SplitS in a way that most probably don't, but it is absolutely brilliant, and I'm thankful that the shortcomings of using SL/RX brought me to that. I found an endgame solution.

So when I'm presented with e.g. Debleed and it has basically no parameters to tweak to make things better, nor even (that I could find) really clear examples…it leaves me wondering, but it seems like it's not just me. Then when I go to online unmixing, it does have variations, e.g. I could use one engine that unmixes the voice from the singer-guitarist takes but drops the esses, run it again with a different model that keeps them, and just merge the results manually…but I don't have to play hide and seek.

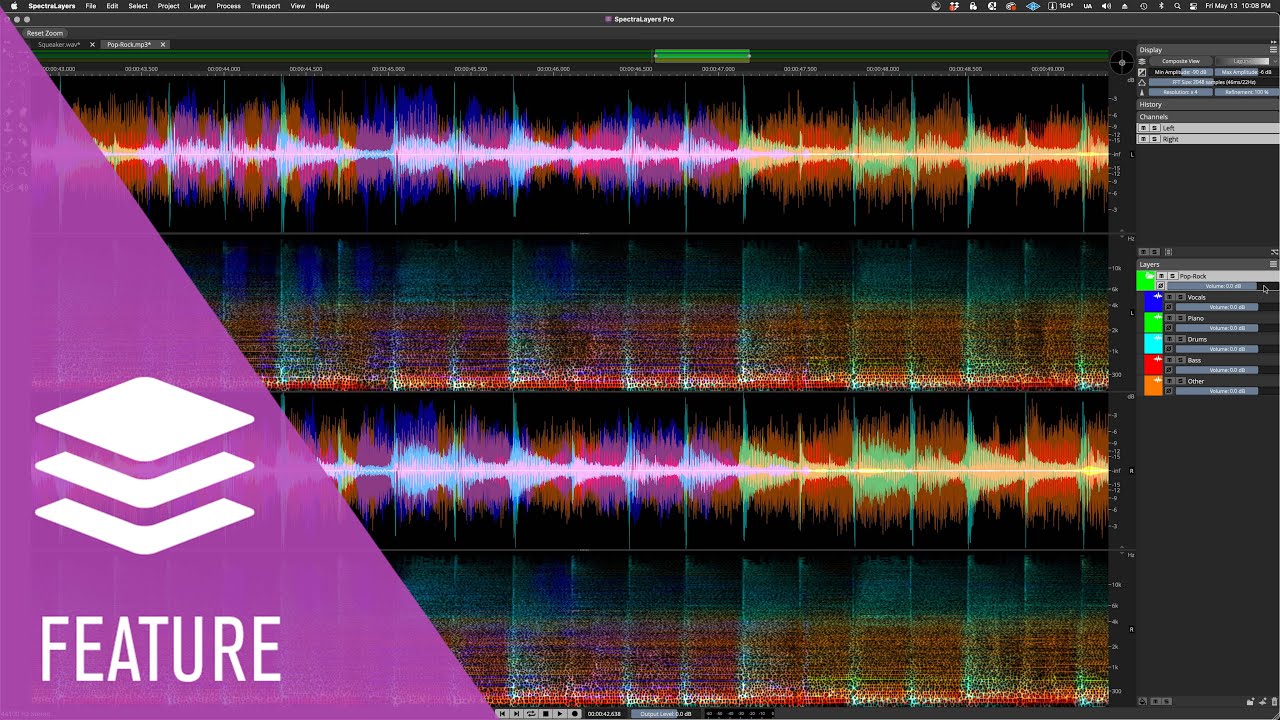

You are correct here. I think I was just let down by the particular toolset and outcomes that were marketed, like the guy debleeding drums…and then the instrument unmix. In my experience it just doesn't work in SL the way I had hoped, and those were misunderstandings on my part. I should have just got the trial and asked the basic questions up front.

I'm not doing forensics, and I appreciate the potential, but for music production the way I'm doing it…it's just the wrong tool. It's not about spectral stuff, though. E.g. I asked about phase rotation because I had dabbled in some other packages, but the SL tool is a token gesture as it's not adaptive phase, and I left out a keyword. Even that is probably not an important tool for many, but it's sooo important if dynamics really matter. Sure, peeps made great records without it 40 years ago, but in this ridiculous age, long after the loudness wars, peeps' ears are trained for “loud”, so every detail counts.

I'm not doing AI music…part of our studio motto is “100% free of artificial ingredients” and we don't even accept any AI songs…I love handmade stuff…hehe…I just misunderstood the marketing of SL and my brain filled in the gaps the wrong way.

It seems like a great tool for forensic-type stuff and soundtrack production, but for fluid musical use it's not the right tool…for me, though I will still keep trying to use it.

I shouldn't have upgraded though…gained some things and lost others…still no better off for debleed in SL, but I have worked out how to use LALAL and SL.

Yeah, it's true not just of transforms but of sitting in front of a screen and mousing. That's why I spent quite a while on hardware and UX…even that being such a frustration with half-baked stuff. I would jump on Reaper straight away, but I'm too old now and Cubase is baked in from over 35 years of use…but it gets the job done.

In summary, SL has helped me start down the road to really getting much better mixes, because of the power to get the sources so much better, but ultimately that is answered by just good unmixing…I don't really need more than that.

Cheers, Todd